Dual space

In mathematics, any vector space, V, has a corresponding dual vector space (or just dual space for short) consisting of all linear functionals on V. Dual vector spaces defined on finite-dimensional vector spaces can be used for defining tensors. When applied to vector spaces of functions (which typically are infinite-dimensional), dual spaces are employed for defining and studying concepts like measures, distributions, and Hilbert spaces. Consequently, the dual space is an important concept in the study of functional analysis.

There are two types of dual spaces: the algebraic dual space and the continuous dual space. The algebraic dual space is defined for all vector spaces. For a topological vector space, the continuous linear functionals form a subspace of the algebraic dual space, called the continuous dual space.


Algebraic dual space

Given any vector space V over a field F, the dual space V* is defined as the set of all linear maps φ: V → F (linear functionals). The dual space V* itself becomes a vector space over F when equipped with the following addition and scalar multiplication:

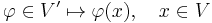

for all φ, ψ ∈ V*, x ∈ V, and a ∈ F. Elements of the algebraic dual space V* are sometimes called covectors or one-forms.
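The pointwise operations above can be illustrated with a minimal Python sketch. It assumes, purely for illustration, that V is Q^2 with vectors stored as tuples and functionals stored as ordinary functions; the names `add`, `scale`, `phi` and `psi` are hypothetical and not part of any library.

```python
from fractions import Fraction

def add(phi, psi):
    """Pointwise sum: (phi + psi)(x) = phi(x) + psi(x)."""
    return lambda x: phi(x) + psi(x)

def scale(a, phi):
    """Scalar multiple: (a * phi)(x) = a * phi(x)."""
    return lambda x: a * phi(x)

# Two hypothetical linear functionals on Q^2 (vectors are tuples).
phi = lambda x: 2 * x[0] + 3 * x[1]
psi = lambda x: x[0] - x[1]

x = (Fraction(1, 2), Fraction(4))
combo = add(phi, scale(Fraction(5), psi))  # the functional phi + 5*psi
print(combo(x))  # -9/2
```

The result of `add` or `scale` is again a function from vectors to scalars, which is the sense in which V* is closed under these operations.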

The pairing of a functional φ in the dual space V* and an element x of V is sometimes denoted by a bracket: φ(x) = [φ, x][1] or φ(x) = ⟨φ, x⟩.[2] The pairing defines a nondegenerate bilinear mapping[3] [·,·] : V* × V → F.

Finite-dimensional case

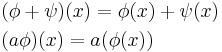

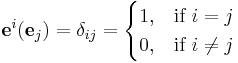

If V is finite-dimensional, then V* has the same dimension as V. Given a basis {e1, ..., en} in V, it is possible to construct a specific basis in V*, called the dual basis. This dual basis is a set {e1, ..., en} of linear functionals on V, defined by the relation

for any choice of coefficients ci ∈ F. In particular, letting in turn each one of those coefficients be equal to one and the other coefficients zero, gives the system of equations

where δij is the Kronecker delta symbol. For example if V is R2, and its basis chosen to be {e1 = (1, 0), e2 = (0, 1)}, then e1 and e2 are one-forms (functions which map a vector to a scalar) such that e1(e1) = 1, e1(e2) = 0, e2(e1) = 0, and e2(e2) = 1. (Note: The superscript here is the index, not an exponent).

In particular, if we interpret R^n as the space of columns of n real numbers, its dual space is typically written as the space of rows of n real numbers. Such a row acts on R^n as a linear functional by ordinary matrix multiplication.
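For a basis other than the standard one, the dual basis can be computed by inverting the matrix whose columns are the basis vectors: the rows of the inverse are the dual basis functionals, written as row vectors. The following is a minimal sketch for the 2-dimensional case over the rationals; the function names `dual_basis_2d` and `pair` are illustrative choices.

```python
from fractions import Fraction

def dual_basis_2d(e1, e2):
    """Return the dual basis (as row vectors) of a basis (e1, e2) of Q^2.

    The rows of the inverse of the matrix with columns e1, e2
    satisfy row_i . e_j = delta_ij.
    """
    a, c = e1  # first column of the matrix
    b, d = e2  # second column of the matrix
    det = Fraction(a * d - b * c)
    if det == 0:
        raise ValueError("e1 and e2 are linearly dependent")
    return ((d / det, -b / det), (-c / det, a / det))

def pair(row, col):
    """Apply a row vector to a column vector by matrix multiplication."""
    return sum(r * c for r, c in zip(row, col))

e1, e2 = (1, 1), (0, 2)
d1, d2 = dual_basis_2d(e1, e2)

# The defining relations e^i(e_j) = delta^i_j.
print(pair(d1, e1), pair(d1, e2), pair(d2, e1), pair(d2, e2))  # 1 0 0 1

# The dual basis reads off coordinates: v = 3*e1 + 1*e2.
v = (3, 5)
print(pair(d1, v), pair(d2, v))  # 3 1
```

The last two lines show the practical meaning of the dual basis: applying e^i to a vector returns its i-th coordinate with respect to the chosen basis.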

If V consists of the space of geometrical vectors (arrows) in the plane, then the level curves of an element of V* form a family of parallel lines in V. So an element of V* can be intuitively thought of as a particular family of parallel lines covering the plane. To compute the value of a functional on a given vector, one needs only to determine which of the lines the vector lies on. Or, informally, one "counts" how many lines the vector crosses. More generally, if V is a vector space of any dimension, then the level sets of a linear functional in V* are parallel hyperplanes in V, and the action of a linear functional on a vector can be visualized in terms of these hyperplanes.[4]

Infinite-dimensional case

If V is not finite-dimensional but has a basis[5] e_α indexed by an infinite set A, then the same construction as in the finite-dimensional case yields linearly independent elements e^α (α ∈ A) of the dual space, but they will not form a basis.

Consider, for instance, the space R^∞, whose elements are those sequences of real numbers which have only finitely many non-zero entries. It has a basis indexed by the natural numbers N: for i ∈ N, e_i is the sequence which is zero apart from the i-th term, which is one. The dual space of R^∞ is R^N, the space of all sequences of real numbers: such a sequence (a_n) is applied to an element (x_n) of R^∞ to give the number ∑ a_n x_n, which is a finite sum because there are only finitely many nonzero x_n. The dimension of R^∞ is countably infinite, whereas R^N does not have a countable basis.
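The pairing between R^N and R^∞ can be sketched in Python under the following assumed representation: an element of R^∞ is a dictionary listing its finitely many nonzero entries, and an element of R^N is any function from indices to numbers. The name `pair` and the sample sequences are hypothetical.

```python
def pair(a, x):
    """Apply the sequence a (any function N -> R) to a finitely supported x.

    x is a dict {index: value} holding its finitely many nonzero entries,
    so the sum below always has finitely many terms.
    """
    return sum(a(n) * xn for n, xn in x.items())

x = {0: 2, 3: -1, 10: 5}    # an element of R^infinity
ones = lambda n: 1          # the all-ones sequence, an element of R^N
squares = lambda n: n * n   # another element of R^N

print(pair(ones, x))     # 6
print(pair(squares, x))  # 491
```

The all-ones sequence has infinitely many nonzero terms, so it is not a finite linear combination of the coordinate functionals e^i. This is one way to see that the e^i are linearly independent but do not span the dual space.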

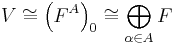

This observation generalizes to any[5] infinite-dimensional vector space V over any field F: a choice of basis {eα : α ∈ A} identifies V with the space (FA)0 of functions ƒ : A → F such that ƒα = ƒ(α) is nonzero for only finitely many α ∈ A, where such a function ƒ is identified with the vector

in V (the sum is finite by the assumption on ƒ, and any v ∈ V may be written in this way by the definition of the basis).

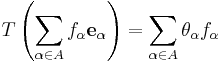

The dual space of V may then be identified with the space FA of all functions from A to F: a linear functional T on V is uniquely determined by the values θα = T(eα) it takes on the basis of V, and any function θ : A → F (with θ(α) = θα) defines a linear functional T on V by

Again the sum is finite because ƒα is nonzero for only finitely many α.

Note that (FA)0 may be identified (essentially by definition) with the direct sum of infinitely many copies of F (viewed as a 1-dimensional vector space over itself) indexed by A, i.e., there are linear isomorphisms

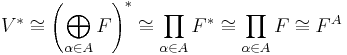

On the other hand FA is (again by definition), the direct product of infinitely many copies of F indexed by A, and so the identification

is a special case of a general result relating direct sums (of modules) to direct products.

Thus if the basis is infinite, then there are always more vectors in the dual space than in the original vector space. This is in marked contrast to the case of the continuous dual space, discussed below, which may be isomorphic to the original vector space even if the latter is infinite-dimensional.

Bilinear products and dual spaces

If V is finite-dimensional, then V is isomorphic to V*. But there is in general no natural isomorphism between these two spaces (MacLane & Birkhoff 1999, §VI.4). Any bilinear form ⟨•,•⟩ on V gives a mapping of V into its dual space via

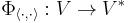

where the right hand side is defined as the functional on V taking each w ∈ V to <v,w>. In other words, the bilinear form determines a linear mapping

defined by

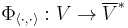

If the bilinear form is assumed to be nondegenerate, then this is an isomorphism onto a subspace of V*. If V is finite-dimensional, then this is an isomorphism onto all of V*. Conversely, any isomorphism Φ from V to a subspace of V* (resp., all of V*) defines a unique nondegenerate bilinear form ⟨•,•⟩Φ on V by

Thus there is a one-to-one correspondence between isomorphisms of V to subspaces of (resp., all of) V* and nondegenerate bilinear forms on V.

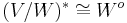

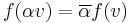
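In coordinates, the map from V to V* induced by a bilinear form is multiplication by the Gram matrix of the form. The sketch below assumes V = Q^2 and a hypothetical Gram matrix `G`; the names `form`, `Phi` and `pair` are illustrative.

```python
# Hypothetical Gram matrix of a bilinear form on Q^2: <e_i, e_j> = G[i][j].
G = ((2, 1), (1, 1))

def form(v, w):
    """The bilinear form <v, w> = sum_ij v_i G_ij w_j."""
    return sum(v[i] * G[i][j] * w[j] for i in range(2) for j in range(2))

def Phi(v):
    """Row-vector coordinates of the functional w -> <v, w>."""
    return tuple(sum(v[i] * G[i][j] for i in range(2)) for j in range(2))

def pair(row, w):
    """Apply a row vector (functional) to a column vector."""
    return sum(r * c for r, c in zip(row, w))

v, w = (1, -2), (3, 4)
print(Phi(v))                    # (0, -1)
print(pair(Phi(v), w), form(v, w))  # -4 -4

# In finite dimensions the form is nondegenerate exactly when det G != 0.
det = G[0][0] * G[1][1] - G[0][1] * G[1][0]
print(det != 0)  # True
```

Here the determinant of `G` is 1, so the form is nondegenerate and `Phi` is an isomorphism of Q^2 onto its dual.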

If the vector space V is over the complex field, then sometimes it is more natural to consider sesquilinear forms instead of bilinear forms. In that case, a given sesquilinear form ⟨•,•⟩ determines an isomorphism of V with the complex conjugate of the dual space

The conjugate space V* can be identified with the set of all additive complex-valued functionals ƒ : V → C such that

Injection into the double-dual

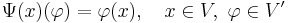

There is a natural homomorphism Ψ from V into the double dual V**, defined by (Ψ(v))(φ) = φ(v) for all v ∈ V, φ ∈ V*. This map Ψ is always injective;[5] it is an isomorphism if and only if V is finite-dimensional. (Infinite-dimensional Hilbert spaces are not a counterexample to this, as they are isomorphic to their continuous duals, not to their algebraic duals.)
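The map Ψ is simply "evaluation at v". A minimal sketch, assuming functionals are represented as Python functions on tuples (the names `Psi`, `phi` and `psi` are hypothetical):

```python
def Psi(v):
    """Evaluation at v: the element of V** that sends phi to phi(v)."""
    return lambda phi: phi(v)

# Two hypothetical functionals on Q^2.
phi = lambda v: 3 * v[0] - v[1]
psi = lambda v: v[1]

v = (2, 5)
ev = Psi(v)
print(ev(phi), ev(psi))  # 1 5
```

No choice of basis enters the definition of `Psi`, which is what makes the map natural.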

Transpose of a linear map

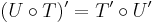

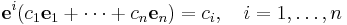

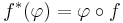

If ƒ : V → W is a linear map, then the transpose (or dual) ƒ* : W* → V* is defined by

for every φ ∈ W*. The resulting functional ƒ*(φ) is in V*, and is called as the pullback of φ along ƒ.

The following identity holds for all φ ∈ W* and v ∈ V:

where the bracket [•,•] on the left is the duality pairing of V with its dual space, and that on the right is the duality pairing of W with its dual. This identity characterizes the transpose,[6] and is formally similar to the definition of the adjoint.

The assignment ƒ ↦ ƒ* produces an injective linear map between the space of linear operators from V to W and the space of linear operators from W* to V*; this homomorphism is an isomorphism if and only if W is finite-dimensional. If V = W then the space of linear maps is actually an algebra under composition of maps, and the assignment is then an antihomomorphism of algebras, meaning that (ƒg)* = g*ƒ*. In the language of category theory, taking the dual of vector spaces and the transpose of linear maps is therefore a contravariant functor from the category of vector spaces over F to itself. Note that one can identify (ƒ*)* with ƒ using the natural injection into the double dual.

If the linear map ƒ is represented by the matrix A with respect to two bases of V and W, then ƒ* is represented by the transpose matrix A^T with respect to the dual bases of W* and V*, hence the name. Alternatively, as ƒ is represented by A acting on the left on column vectors, ƒ* is represented by the same matrix acting on the right on row vectors. These points of view are related by the canonical inner product on R^n, which identifies the space of column vectors with the dual space of row vectors.
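Both descriptions of the transpose can be checked on a small example. The sketch below assumes a hypothetical 3×2 integer matrix `A` representing ƒ : Q^2 → Q^3 in the standard bases; the helper names are illustrative.

```python
A = ((1, 2), (0, 1), (3, 0))  # hypothetical matrix of f : Q^2 -> Q^3

def f(v):
    """f(v) = A v, with A acting on the left on column vectors."""
    return tuple(sum(row[j] * v[j] for j in range(2)) for row in A)

def f_star(phi):
    """f*(phi) = phi A, with A acting on the right on row vectors."""
    return tuple(sum(phi[i] * A[i][j] for i in range(3)) for j in range(2))

def pair(row, col):
    """Duality pairing of a row vector with a column vector."""
    return sum(r * c for r, c in zip(row, col))

phi = (2, 0, 1)   # a functional on Q^3
v = (1, -1)       # a vector in Q^2

print(f_star(phi))                          # (5, 4)
print(pair(f_star(phi), v), pair(phi, f(v)))  # 1 1

# The same coordinates arise from the transpose matrix applied to phi.
A_T = tuple(zip(*A))
print(tuple(pair(row, phi) for row in A_T))  # (5, 4)
```

The second printed line is the identity [ƒ*(φ), v] = [φ, ƒ(v)] for this particular φ and v.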

Quotient spaces and annihilators

Let S be a subset of V. The annihilator of S in V*, denoted here So, is the collection of linear functionals ƒ ∈ V* such that [ƒ, s] = 0 for all s ∈ S. That is, So consists of all linear functionals ƒ : V → F such that the restriction to S vanishes: ƒ|S = 0.

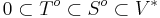

The annihilator of a subset is itself a vector space. In particular, ∅o = V* is all of V* (vacuously), whereas Vo = 0 is the zero subspace. Furthermore, the assignment of an annihilator to a subset of V reverses inclusions, so that if S ⊂ T ⊂ V, then

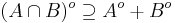

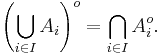

Moreover, if A and B are two subsets of V, then

and equality holds provided V is finite-dimensional. If Ai is any family of subsets of V indexed by i belonging to some index set I, then

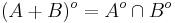

In particular if A and B are subspaces of V, it follows that

If V is finite-dimensional, and W is a vector subspace, then

after identifying W with its image in the second dual space under the double duality isomorphism V ≈ V**. Thus, in particular, forming the annihilator is a Galois connection on the lattice of subsets of a finite-dimensional vector space.

If W is a subspace of V then the quotient space V/W is a vector space in its own right, and so has a dual. By the first isomorphism theorem, a functional ƒ : V → F factors through V/W if and only if W is in the kernel of ƒ. There is thus an isomorphism

$$(V/W)^* \cong W^o$$

As a particular consequence, if V is a direct sum of two subspaces A and B, then V* is a direct sum of A^o and B^o.
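In finite dimensions an annihilator can be computed as a null space: a row vector φ annihilates a list of vectors exactly when it is orthogonal, under the row-column pairing, to each of them. The following is a sketch using Gauss-Jordan elimination over the rationals; the function name `annihilator_basis` is a hypothetical choice, and vectors in Q^n are assumed to be given as tuples.

```python
from fractions import Fraction

def annihilator_basis(vectors, n):
    """Basis (as row vectors) of the annihilator of span(vectors) in (Q^n)*.

    A row phi annihilates every listed vector exactly when M phi^T = 0,
    where the rows of M are the given vectors, so this is a null space.
    """
    rows = [[Fraction(x) for x in v] for v in vectors]
    pivots = []
    r = 0
    for c in range(n):
        # Look for a row at or below r with a nonzero entry in column c.
        p = next((i for i in range(r, len(rows)) if rows[i][c] != 0), None)
        if p is None:
            continue
        rows[r], rows[p] = rows[p], rows[r]
        pv = rows[r][c]
        rows[r] = [x / pv for x in rows[r]]
        for i in range(len(rows)):
            if i != r and rows[i][c] != 0:
                factor = rows[i][c]
                rows[i] = [a - factor * b for a, b in zip(rows[i], rows[r])]
        pivots.append(c)
        r += 1
    basis = []
    for free in (c for c in range(n) if c not in pivots):
        # One basis functional per free column of the reduced matrix.
        phi = [Fraction(0)] * n
        phi[free] = Fraction(1)
        for row_index, pc in enumerate(pivots):
            phi[pc] = -rows[row_index][free]
        basis.append(tuple(phi))
    return basis

W = [(1, 0, 1), (0, 1, 1)]           # spans a plane in Q^3
W_o = annihilator_basis(W, 3)
print([tuple(int(x) for x in phi) for phi in W_o])  # [(-1, -1, 1)]
print(len(annihilator_basis([], 3)))                # 3
```

In the example the annihilator of a 2-dimensional subspace of Q^3 is 1-dimensional, in line with the finite-dimensional identity dim W^o = dim V − dim W, and the empty set has all of V* as its annihilator.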

Continuous dual space

When dealing with topological vector spaces, one is typically only interested in the continuous linear functionals from the space into the base field. This gives rise to the notion of the "continuous dual space", which is a linear subspace of the algebraic dual space V*, denoted V′. For any finite-dimensional normed vector space or topological vector space, such as Euclidean n-space, the continuous dual and the algebraic dual coincide. This is however false for any infinite-dimensional normed space, as shown by the example of discontinuous linear maps.

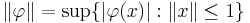

The continuous dual V′ of a normed vector space V (e.g., a Banach space or a Hilbert space) forms a normed vector space. A norm ||φ|| of a continuous linear functional on V is defined by

This turns the continuous dual into a normed vector space, indeed into a Banach space so long as the underlying field is complete, which is often included in the definition of the normed vector space. In other words, this dual of a normed space over a complete field is necessarily complete.

The continuous dual can be used to define a new topology on V, called the weak topology.

Examples

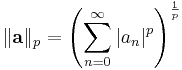

Let 1 < p < ∞ be a real number and consider the Banach space ℓ p of all sequences a = (an) for which

is finite. Define the number q by 1/p + 1/q = 1. Then the continuous dual of ℓ p is naturally identified with ℓ q: given an element φ ∈ (ℓ p)′, the corresponding element of ℓ q is the sequence (φ(en)) where en denotes the sequence whose n-th term is 1 and all others are zero. Conversely, given an element a = (an) ∈ ℓ q, the corresponding continuous linear functional φ on ℓ p is defined by φ(b) = ∑n anbn for all b = (bn) ∈ ℓ p (see Hölder's inequality).
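The role of Hölder's inequality can be checked numerically on finite sequences, which stand in here for elements of ℓ^p and ℓ^q. The sketch below uses floating-point arithmetic and hypothetical sample data; it verifies the bound and shows that it is attained, so the norm of the functional defined by a equals the ℓ^q norm of a.

```python
def norm(a, p):
    """The l^p norm of a finite sequence, for 1 <= p < infinity."""
    return sum(abs(x) ** p for x in a) ** (1.0 / p)

def pair(a, b):
    """The functional defined by a, applied to b."""
    return sum(x * y for x, y in zip(a, b))

p = 3.0
q = p / (p - 1.0)            # conjugate exponent, so 1/p + 1/q = 1

a = [1.0, -2.0, 0.5, 3.0]    # plays the role of an element of l^q
b = [0.3, 1.0, -4.0, 2.0]    # plays the role of an element of l^p

# Hoelder's inequality bounds the pairing by the product of the norms.
holder_ok = abs(pair(a, b)) <= norm(a, q) * norm(b, p)

# The bound is attained at b*_n = sign(a_n) * |a_n|^(q - 1).
b_star = [(1 if x >= 0 else -1) * abs(x) ** (q - 1.0) for x in a]
ratio = pair(a, b_star) / norm(b_star, p)

print(holder_ok)
print(abs(ratio - norm(a, q)) < 1e-9)
```

The extremal sequence works because (q − 1)p = q, so both the pairing and the ℓ^p norm of `b_star` reduce to powers of the ℓ^q norm of `a`.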

In a similar manner, the continuous dual of ℓ^1 is naturally identified with ℓ^∞ (the space of bounded sequences). Furthermore, the continuous duals of the Banach spaces c (consisting of all convergent sequences, with the supremum norm) and c_0 (the sequences converging to zero) are both naturally identified with ℓ^1.

By the Riesz representation theorem, the continuous dual of a Hilbert space is again a Hilbert space which is anti-isomorphic to the original space. This gives rise to the bra-ket notation used by physicists in the mathematical formulation of quantum mechanics.
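The anti-isomorphism can be illustrated on C^2. The sketch below assumes the physicists' convention that the inner product is conjugate-linear in its first argument; the names `inner` and `riesz` are hypothetical. It checks that scaling a vector by c scales the functional it represents by the complex conjugate of c.

```python
def inner(v, w):
    """Inner product on C^n, conjugate-linear in the first argument (assumed convention)."""
    return sum(x.conjugate() * y for x, y in zip(v, w))

def riesz(v):
    """The continuous functional w -> <v, w> represented by v."""
    return lambda w: inner(v, w)

v = (1 + 2j, 3j)
w = (2 - 1j, 1 + 1j)
c = 2 - 3j
cv = tuple(c * x for x in v)

lhs = riesz(cv)(w)                  # functional represented by c*v
rhs = c.conjugate() * riesz(v)(w)   # conj(c) times the functional of v
print(lhs, rhs)
```

Both printed values agree, which is the antilinearity of the map v ↦ ⟨v, •⟩ for this sample data.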

Transpose of a continuous linear map

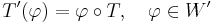

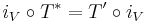

If T : V → W is a continuous linear map between two topological vector spaces, then the (continuous) transpose T′ : W′ → V′ is defined by the same formula as before:

The resulting functional T′(φ) is in V′. The assignment T → T′ produces a linear map between the space of continuous linear maps from V to W and the space of linear maps from W′ to V′. When T and U are composable continuous linear maps, then

When V and W are normed spaces, the norm of the transpose in L(W′, V′) is equal to that of T in L(V, W). Several properties of transposition depend upon the Hahn–Banach theorem. For example, the bounded linear map T has dense range if and only if the transpose T′ is injective.

When T is a compact linear map between two Banach spaces V and W, then the transpose T′ is compact. This can be proved using the Arzelà–Ascoli theorem.

When V is a Hilbert space, there is an antilinear isomorphism i_V from V onto its continuous dual V′. For every bounded linear map T on V, the transpose and the adjoint operators are linked by

$$i_V \circ T^* = T' \circ i_V$$

When T is a continuous linear map between two topological vector spaces V and W, then the transpose T′ is continuous when W′ and V′ are equipped with "compatible" topologies: for example when, for X = V and X = W, both duals X′ have the strong topology β(X′, X) of uniform convergence on bounded sets of X, or both have the weak-∗ topology σ(X′, X) of pointwise convergence on X. The transpose T′ is continuous from β(W′, W) to β(V′, V), or from σ(W′, W) to σ(V′, V).

Annihilators

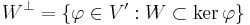

Assume that W is a closed linear subspace of a normed space V, and consider the annihilator of W in V′,

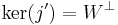

Then, the dual of the quotient V / W can be identified with W⊥, and the dual of W can be identified with the quotient V′ / W⊥.[7] Indeed, let P denote the canonical surjection from V onto the quotient V / W ; then, the transpose P′ is an isometric isomorphism from (V / W )′ into V′, with range equal to W⊥. If j denotes the injection map from W into V, then the kernel of the transpose j′ is the annihilator of W:

and it follows from the Hahn–Banach theorem that j′ induces an isometric isomorphism V′ / W⊥ → W′.

Further properties

If the dual of a normed space V is separable, then so is the space V itself. The converse is not true: for example the space ℓ^1 is separable, but its dual ℓ^∞ is not.

Double dual

In analogy with the case of the algebraic double dual, there is always a naturally defined continuous linear operator Ψ : V → V′′ from a normed space V into its continuous double dual V′′, defined by

$$\Psi(x)(\varphi) = \varphi(x), \quad x \in V,\ \varphi \in V'$$

As a consequence of the Hahn–Banach theorem, this map is in fact an isometry, meaning ||Ψ(x)|| = ||x|| for all x in V. Normed spaces for which the map Ψ is a bijection are called reflexive.

When V is a topological vector space, one can still define Ψ(x) by the same formula, for every x ∈ V, however several difficulties arise. First, when V is not locally convex, the continuous dual may be equal to {0} and the map Ψ trivial. However, if V is Hausdorff and locally convex, the map Ψ is injective from V to the algebraic dual V′* of the continuous dual, again as a consequence of the Hahn–Banach theorem.[8]

Second, even in the locally convex setting, several natural vector space topologies can be defined on the continuous dual V′, so that the continuous double dual V′′ is not uniquely defined as a set. Saying that Ψ maps from V to V′′, or in other words, that Ψ(x) is continuous on V′ for every x ∈ V, is a reasonable minimal requirement on the topology of V′, namely that the evaluation mappings

$$\varphi \in V' \mapsto \varphi(x), \quad x \in V$$

be continuous for the chosen topology on V′. Further, there is still a choice of a topology on V′′, and continuity of Ψ depends upon this choice. As a consequence, defining reflexivity in this framework is more involved than in the normed case.

See also

- Duality (mathematics)

- Duality (projective geometry)

- Pontryagin duality

- Reciprocal lattice – dual space basis, in crystallography

- Covariance and contravariance of vectors

Notes

1. Halmos (1974)

2. Misner, Thorne & Wheeler (1973)

3. In many areas, such as quantum mechanics, ⟨·,·⟩ is reserved for a sesquilinear form defined on V × V.

4. Misner, Thorne & Wheeler (1973, §2.5)

5. Several assertions in this article require the axiom of choice for their justification. The axiom of choice is needed to show that an arbitrary vector space has a basis: in particular it is needed to show that R^N has a basis. It is also needed to show that the dual of an infinite-dimensional vector space V is nonzero, and hence that the natural map from V to its double dual is injective.

6. Halmos (1974, §44)

7. Rudin (1991, chapter 4)

8. If V is locally convex but not Hausdorff, the kernel of Ψ is the smallest closed subspace containing {0}.

References

- Bourbaki, Nicolas (1989), Elements of mathematics, Algebra I, Springer-Verlag, ISBN 3-540-64243-9

- Halmos, Paul (1974), Finite-dimensional Vector Spaces, Springer, ISBN 0387900934

- Lang, Serge (2002), Algebra, Graduate Texts in Mathematics, 211 (Revised third ed.), New York: Springer-Verlag, ISBN 978-0-387-95385-4, MR1878556

- MacLane, Saunders; Birkhoff, Garrett (1999), Algebra (3rd ed.), AMS Chelsea Publishing, ISBN 0-8218-1646-2.

- Misner, Charles W.; Thorne, Kip S.; Wheeler, John A. (1973), Gravitation, W. H. Freeman, ISBN 0-7167-0344-0

- Rudin, Walter (1991), Functional analysis, McGraw-Hill Science, ISBN 978-0070542365
